Beyond Giants and Gatekeepers: Why Trust Must Be the New Competitive Axis

As AI reshapes competition, scale is no longer enough — the next era of digital innovation will be defined by the quality and portability of trust across humans, agents, and systems.

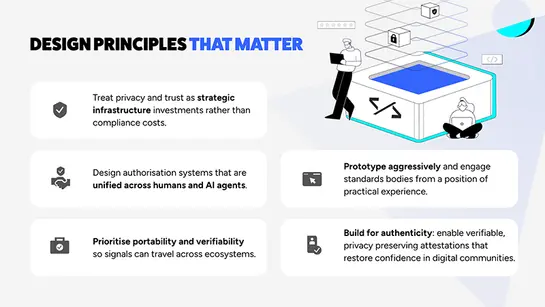

Affinidi’s participation at the Summit on Human Agency sharpened a central argument: the next era of digital innovation will be decided not by scale alone but by the quality and portability of trust. Enterprises and builders must move from treating privacy and consent as compliance chores to designing them as foundational infrastructure that enables human authority to scale alongside AI.

Trust as Architecture, Not Afterthought

The conversation at the summit made one thing clear: trust cannot be bolted on after a product ships. When consent, provenance, and authorisation are engineered as native primitives, systems behave differently. Data flows more freely, collaborations become possible across organisational boundaries, and users retain meaningful control. Affinidi’s position is that decentralised, first-person collected trust graphs are the practical architecture for this shift. These graphs let humans, AI agents, devices, and transactions participate in the same authorisation fabric so that intent and permission travel with the user rather than being trapped inside a single vendor’s silo.

Designing trust as infrastructure changes product decisions. It alters how credentials are modelled, how consent flows are presented, and how APIs are structured so that attestations are both machine-readable and user-controlled. It also reframes partnerships: interoperability and portability become strategic assets rather than optional conveniences.
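To make "machine-readable and user-controlled" concrete, here is a minimal sketch of a consent attestation that a user could hold and present to any service. It is an illustration only, not Affinidi's API: the function names and record fields are hypothetical, and an HMAC over canonical JSON stands in for the public-key signature a real credential would carry.

```python
import hashlib
import hmac
import json


def _canonical(record: dict) -> bytes:
    # Stable byte encoding so issuer and verifier hash the same thing.
    return json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")


def issue_attestation(issuer_key: bytes, subject: str, purpose: str, granted: bool) -> dict:
    """Build a consent record and attach a proof (hypothetical field names)."""
    record = {"subject": subject, "purpose": purpose, "granted": granted}
    # Assumption: HMAC is a stand-in; production systems would use asymmetric signatures.
    proof = hmac.new(issuer_key, _canonical(record), hashlib.sha256).hexdigest()
    return {"record": record, "proof": proof}


def verify_attestation(issuer_key: bytes, attestation: dict) -> bool:
    """Recompute the proof and compare in constant time."""
    expected = hmac.new(issuer_key, _canonical(attestation["record"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, attestation["proof"])


if __name__ == "__main__":
    key = b"demo-issuer-key"
    att = issue_attestation(key, "user:alice", "share-email", True)
    print(verify_attestation(key, att))
```

Because the record is plain JSON with a proof attached, the user can carry it between services, and any change to the consent terms invalidates the proof.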

One System for Humans and Agents

A defining insight that framed Affinidi’s stance at the summit was the necessity of a unified authorisation model. As our CEO Glenn Gore put it, “Creating a system for humans to authorise and a separate system for AI agents? It does not work.” That statement captures a technical and ethical imperative: humans and AI will co-orchestrate outcomes, and the architecture must reflect that hybridity.

Practically, this means treating an AI assistant, a smart building, and a human user as nodes in the same trust topology. Consent and attestations must be portable and verifiable across contexts so that an individual’s intent can be expressed once and honoured everywhere. For enterprises, the implication is profound: identity and access strategies must evolve from perimeter controls to portable, verifiable signals that travel with the user and their agents.
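One way to picture a single trust topology is a graph in which humans, agents, and devices are all the same kind of node, and every permission must trace back through delegations to the resource owner. The sketch below is a simplified illustration under that assumption; the class and method names are hypothetical and not drawn from any Affinidi product.

```python
from dataclasses import dataclass, field
from enum import Enum


class Kind(Enum):
    HUMAN = "human"
    AGENT = "agent"
    DEVICE = "device"


@dataclass(frozen=True)
class Principal:
    id: str
    kind: Kind


@dataclass(frozen=True)
class Grant:
    grantor: str  # principal id delegating the permission
    grantee: str  # principal id receiving it
    action: str
    resource: str


@dataclass
class TrustGraph:
    principals: dict = field(default_factory=dict)
    owners: dict = field(default_factory=dict)  # resource -> owner principal id
    grants: set = field(default_factory=set)

    def add(self, principal: Principal, owns=()):
        self.principals[principal.id] = principal
        for resource in owns:
            self.owners[resource] = principal.id

    def grant(self, grantor, grantee, action, resource):
        self.grants.add(Grant(grantor, grantee, action, resource))

    def revoke(self, grantor, grantee, action, resource):
        self.grants.discard(Grant(grantor, grantee, action, resource))

    def is_authorised(self, principal_id, action, resource, _seen=None):
        # The owner is always authorised; everyone else needs a chain of
        # grants leading back to the owner. The same check serves all kinds.
        if self.owners.get(resource) == principal_id:
            return True
        seen = _seen or set()
        if principal_id in seen:  # guard against delegation cycles
            return False
        seen = seen | {principal_id}
        return any(
            g.grantee == principal_id
            and g.action == action
            and g.resource == resource
            and self.is_authorised(g.grantor, action, resource, seen)
            for g in self.grants
        )


if __name__ == "__main__":
    graph = TrustGraph()
    graph.add(Principal("alice", Kind.HUMAN), owns=["calendar"])
    graph.add(Principal("assistant", Kind.AGENT))
    graph.add(Principal("lobby-door", Kind.DEVICE))
    graph.grant("alice", "assistant", "read", "calendar")
    graph.grant("assistant", "lobby-door", "read", "calendar")
    print(graph.is_authorised("lobby-door", "read", "calendar"))
```

Revoking Alice's grant to the assistant also cuts off the device downstream, because authority is always derived from the human owner rather than stored separately per system.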

The Economic Case for Patience

The summit also surfaced a hard truth about incentives. Short-term capital markets and quarterly metrics rarely reward investments in privacy and long-term resilience. Glenn Gore articulated this tension plainly: “Investing in privacy and trust is not good economic judgment… You have to have patience and a long-term view.” That observation reframes trust as a multiyear investment whose returns compound over time.

The payoff for enterprises is systemic rather than immediate. Trust infrastructure reduces friction in data sharing, increases customer lifetime value, and enables participation in ecosystems that require verifiable signals. Organisations that accept longer horizons will be better positioned to capture the next wave of innovation, one that emerges when data is both more available and more trustworthy.

Gatekeepers, Approval Friction, and Structural Limits

Technical feasibility does not guarantee practical deployment. Platform gatekeeping, in the form of approval processes, distribution controls, and opaque policy decisions, remains a major barrier to innovation. Affinidi’s own experience with a mobile app that functioned technically but struggled to gain platform approval illustrated how centralised control can determine which forms of human agency scale. That friction is not merely operational; it shapes the contours of what is possible in product design and market access.

This structural reality requires a strategic pivot: competing with Amazon, Google, Apple, and OpenAI is no longer merely a contest of scale but a choice of differentiation. Affinidi’s view is that organisations should stop trying to outspend or outreach those giants and instead compete on trust, by building systems that prioritise user control, portability, and verifiable consent. Those capabilities create durable value and user loyalty that locked, centralised ecosystems struggle to replicate; the competitive battleground shifts from raw reach to the integrity, portability, and quality of the trust signals an organisation can offer.

Authenticity in an Era of Synthetic Communities

A recurring theme at the summit was authenticity. As synthetic media and fake communities proliferate, the market value of verifiable human connection rises. Glenn described this as an “inverse innovation challenge”: AI will make humans care more about privacy. Fake communities will force real communities to be better. That dynamic creates demand for systems that can prove provenance while preserving privacy.

For builders, the practical work is to design identity and reputation systems that are portable and privacy-preserving. For enterprises, it is to recognise that customers will increasingly choose services that can demonstrate authenticity without exposing unnecessary personal data. Affinidi’s approach includes building primitives that enable verifiable attestations under user control. This addresses both sides of that demand, enabling real communities to reassert value in a landscape crowded with synthetic alternatives.
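Demonstrating authenticity without exposing unnecessary data is often approached through selective disclosure. The sketch below shows the general idea with salted hash commitments: an issuer commits to every claim, and the holder later reveals only the claims they choose. It is a simplified illustration, not Affinidi's implementation; the function names are hypothetical, and the issuer's signature over the digest list is assumed and omitted.

```python
import hashlib
import json
import secrets


def _digest(salt: str, name: str, value) -> str:
    # Salting stops a verifier from guessing undisclosed values by brute force.
    payload = json.dumps([salt, name, value], separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def commit(claims: dict):
    """Issuer side: one salted digest per claim. Salts go to the holder only."""
    salts = {name: secrets.token_hex(16) for name in claims}
    digests = sorted(_digest(salts[name], name, value) for name, value in claims.items())
    return digests, salts


def disclose(claims: dict, salts: dict, names: list):
    """Holder side: reveal only the chosen claims with their salts."""
    return [(salts[name], name, claims[name]) for name in names]


def verify(digests: list, disclosures: list) -> bool:
    """Verifier side: each revealed claim must match a committed digest."""
    return all(_digest(salt, name, value) in digests for salt, name, value in disclosures)


if __name__ == "__main__":
    claims = {"is_human": True, "member_since": 2021, "email": "alice@example.com"}
    digests, salts = commit(claims)
    shown = disclose(claims, salts, ["is_human"])
    print(verify(digests, shown))
```

Here a community could confirm that a member is a verified human without ever seeing the email address or join date that sit behind the other digests.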

A Pragmatic Rhythm: Prototype, Learn, Standardise

Standards and committees matter, but they rarely lead innovation. The summit reinforced a pragmatic rhythm: prototype in the wild, learn from real usage, and then engage standards bodies from a position of practice. Affinidi’s posture is to build interoperable primitives, test them in production flows, and collaborate with standards organisations to scale what proves useful.

This build-first approach accelerates learning and ensures that standards reflect lived reality rather than abstract ideals. It also reduces the risk that standards become ossified or irrelevant. When enterprises and developers lead with practical implementations, they create the evidence that standards bodies need to codify interoperable behaviours.

A Collective Mandate

The Summit on Human Agency did not produce a single blueprint. Instead, it produced a collective mandate: the technical problems are solvable, but the social and economic choices that determine which solutions scale are the harder work. Enterprises must accept longer horizons. Developers must design for orchestration rather than automation. Platform owners must reckon with the consequences of gatekeeping.

Affinidi’s commitment is to build the primitives and the argument for a future where human authority is portable, verifiable, and resilient. If the last decade was defined by making data available, the next will be defined by making data trustworthy. That shift requires a generation of attention, and a willingness to treat trust not as a constraint but as the architecture of the AI era.

Build with Affinidi

Start building trust infrastructure with our open-source tools and developer-friendly APIs.