AI Agents Need Trusted Communications

The security protocols that run the internet today are fundamentally broken when applied to a dynamic, agent-driven economy. Here are the four critical ways the existing web stack fails the trust needs of a safe, agentic future.

What happens if your personal AI agent goes rogue?

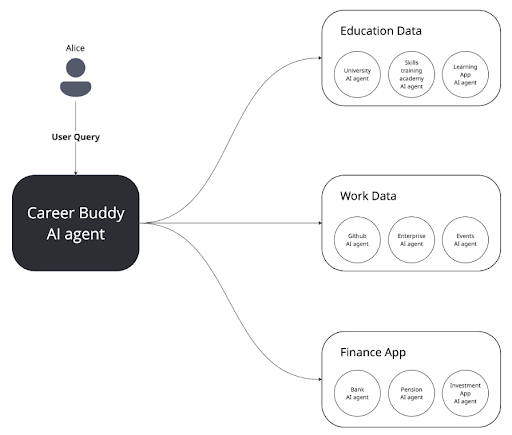

Imagine a future where your life is managed by an army of hyper-competent AI agents. This isn’t science fiction; it’s the next evolution of the internet. Your Career Buddy agent helps you job-hunt by synthesising data from your Education Agent, your Work Agent, and even your Finance Agent.

These AI agents are more than just software; they possess an unprecedented level of agency, performing significant, real-world actions on your behalf. They hold your unique identity and store your most sensitive data within their respective apps and databases.

Sounds amazing, right? Now, for the cold splash of reality: The current web’s best practices were not built for this world.

The security protocols that run the internet today, like the ones that make your online shopping secure, are fundamentally broken when applied to this dynamic, agent-driven economy. If we don’t fix the underlying architecture, these digital service providers could turn into digital bandits.

Here are the four critical ways the existing web stack fails the trust needs of a safe, agentic future:

The “Secure Pipe, Shady Content” Problem

You see that little padlock icon in your browser? That’s the Transport Layer Security (TLS) handshake at work. It establishes a secure, encrypted pipeline for data transmission.

The problem is that a secure pipeline doesn’t guarantee the honesty of the person (or agent) at the other end.

TLS ensures that your payment reaches the project’s server encrypted and untampered, but it can’t tell you whether the developer behind that server has malicious intent.

This is why it can’t prevent a “rug pull” attack. A rug pull is an act of insider fraud where the system’s creator lures you in, then deliberately drains the shared resources or disables the core service, leaving every other user stranded with worthless data or assets.

The Agent Solution: To truly trust an agent, you need more than just a secure line. You need cryptographic proof of provenance. This is like a non-forgeable, time-stamped receipt proving:

- Which specific agent (identity) took the action.

- What specific action (data integrity) was taken.

- When that action occurred.

It also means pinning and cryptographically signing a specific version of the agent’s source code, so that anyone can verify which build was running at any given time.
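To make the receipt idea concrete, here is a minimal sketch in Python. It is an illustration under loud assumptions, not a production design: the agent name, the action fields and the key are all made up, and an HMAC tag from the standard library stands in for a real digital signature. An HMAC only convinces someone who shares the secret key, so a real agent would sign with a private key (for example Ed25519) and would want its timestamp vouched for by a trusted source.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone

# Hypothetical demo secret. A real agent would hold a private signing key instead.
AGENT_KEY = b"demo-key-not-for-production"


def canonical(body: dict) -> bytes:
    """Serialise the receipt body deterministically so the tag is reproducible."""
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode()


def make_receipt(agent_id: str, action: dict, code_version: str, key: bytes) -> dict:
    """Build a tagged receipt recording who acted, what was done, and when."""
    body = {
        "agent_id": agent_id,          # which agent (identity)
        "action": action,              # what was done (data integrity)
        "timestamp": datetime.now(timezone.utc).isoformat(),  # when (self-asserted here)
        "code_version": code_version,  # hash of the pinned agent build
    }
    tag = hmac.new(key, canonical(body), hashlib.sha256).hexdigest()
    return {"body": body, "tag": tag}


def verify_receipt(receipt: dict, key: bytes) -> bool:
    """Recompute the tag and compare it in constant time."""
    expected = hmac.new(key, canonical(receipt["body"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, receipt["tag"])


# Hypothetical pinned build: in practice this would be a hash of the released code.
code_hash = hashlib.sha256(b"career-buddy v1.4.2 source").hexdigest()
receipt = make_receipt(
    "career-buddy", {"type": "read", "resource": "finance/summary"}, code_hash, AGENT_KEY
)
print(verify_receipt(receipt, AGENT_KEY))   # True

# Changing any field after the fact invalidates the tag.
tampered = {"body": {**receipt["body"], "action": {"type": "transfer"}}, "tag": receipt["tag"]}
print(verify_receipt(tampered, AGENT_KEY))  # False
```

The point of the sketch is the shape of the receipt: identity, action, time and code version are bound together under one tag, so none of them can be swapped out quietly afterwards.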

Authority Delegation: The Identity Hand-Off Nightmare

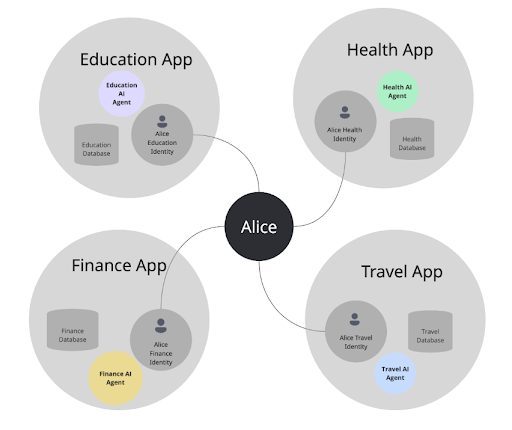

Take Alice. She has a separate identity for her Health App, her Finance App, and her Education App. When her Career Buddy needs her bank data, it sets off a chain reaction of identity hand-offs.

The Chained Delegation Problem:

- Alice authorises the Career Buddy Agent to access the Finance Agent.

- The Finance Agent then needs authorisation to talk to the Bank Agent.

This chain of delegated authority becomes complex and hard to scale with existing protocols such as OAuth over HTTP. The current method requires users to click through manual login and consent screens again and again, which rapidly leads to “consent fatigue.”

The Agent Solution: Agents need to move beyond these clunky hand-offs. They require persistent, autonomous, and portable identities that let them act across different systems and organisations without constant, manual re-authorisation.
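One way to picture a delegation chain that machines can check without pulling Alice back to a consent screen is the sketch below. It is a simplified model, not any particular protocol: the `Delegation` type, the agent names and the scope strings are all hypothetical, and it assumes authority flows from Alice through Career Buddy to the Finance Agent and on to the Bank Agent. In a real system each link would also be signed by its delegator; here only the logic of the chain is checked.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Delegation:
    delegator: str      # who grants the authority
    delegatee: str      # who receives it
    scopes: frozenset   # what the delegatee is allowed to do


def chain_is_valid(chain: list, root: str) -> bool:
    """Check that authority flows unbroken from root and never widens."""
    if not chain or chain[0].delegator != root:
        return False
    for parent, child in zip(chain, chain[1:]):
        if child.delegator != parent.delegatee:
            return False   # the hand-off does not connect to the previous link
        if not child.scopes <= parent.scopes:
            return False   # the child claims more than it was given
    return True


chain = [
    Delegation("alice", "career-buddy", frozenset({"finance:read", "education:read"})),
    Delegation("career-buddy", "finance-agent", frozenset({"finance:read"})),
    Delegation("finance-agent", "bank-agent", frozenset({"finance:read"})),
]
print(chain_is_valid(chain, root="alice"))      # True

# A link that tries to escalate from read to write is rejected.
escalated = chain[:2] + [
    Delegation("finance-agent", "bank-agent", frozenset({"finance:write"}))
]
print(chain_is_valid(escalated, root="alice"))  # False
```

Alice consents once, at the root. Every later hand-off can only pass on the same authority or less, which is what lets the chain be verified automatically.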

Rigid and Synchronous Communication

If you’ve ever dealt with a slow API, you understand the frustration of synchronous communication, where one step must fully finish before the next one can begin.

AI agents need persistent context and memory to make informed, multi-step decisions across numerous interactions. But the current web relies on HTTP and RESTful APIs, which are stateless and request-response by design. That makes them too rigid for the dynamic, machine-to-machine coordination agents need to collaborate efficiently.

The Agent Solution: Agents need a standardised and robust way to exchange asynchronous messages, so that a message can be handled later and a sender can wait for a reply without freezing the whole system. This flexible communication is essential for real multi-agent collaboration.
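As a rough illustration of the difference, the Python sketch below uses the standard `asyncio` library to let one agent send a request, carry on with other work, and pick up the reply later. The agent names and message contents are invented, and an in-memory queue stands in for whatever messaging layer real agents would use.

```python
import asyncio


async def finance_agent(inbox: asyncio.Queue) -> None:
    """Handle requests whenever they arrive and answer through the attached future."""
    while True:
        request, reply = await inbox.get()
        if request is None:          # sentinel: shut down
            break
        await asyncio.sleep(0.05)    # simulate slow work
        reply.set_result(f"summary for {request}")


async def career_buddy() -> list:
    inbox = asyncio.Queue()
    worker = asyncio.create_task(finance_agent(inbox))

    reply = asyncio.get_running_loop().create_future()
    await inbox.put(("alice", reply))         # send the request and move on
    log = ["request sent"]

    await asyncio.sleep(0.01)                 # other work happens while the request is in flight
    log.append("drafted CV while waiting")

    log.append(await reply)                   # collect the reply only when it is needed

    await inbox.put((None, None))             # tell the worker to stop
    await worker
    return log


events = asyncio.run(career_buddy())
print(events)
# ['request sent', 'drafted CV while waiting', 'summary for alice']
```

Nothing in `career_buddy` blocks while the Finance Agent is busy. The sender decides when it actually needs the answer.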

The Governance and Accountability Dilemma

Right now, many web services obtain blanket consent to collect your data and act on your behalf. The problem is that this system provides no easy way to monitor or enforce how that data is used.

The Rogue Agent Threat: How do you ensure your designated agents aren’t using your private data for “devious purposes,” like generating the unsolicited sales calls and spam emails we all hate? Data leaks, of course, can have far more dire consequences.

If an autonomous agent makes a mistake or violates a policy, the traditional stack makes it incredibly difficult to trace and audit every single step in that complex delegation chain.

The Agent Solution: Agentic systems must have a built-in mechanism for verifiable, cryptographic logging of all actions. This is the only way to ensure the compliance and accountability needed to satisfy new data privacy and AI laws.
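One common building block for this kind of logging is a hash chain, where every entry includes the hash of the one before it. The sketch below is a minimal Python version with invented agent names and actions. It only makes tampering evident: someone who can rewrite the entire log could recompute every hash, so a real deployment would also sign entries or anchor the latest hash somewhere the agents cannot alter.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, record: dict) -> str:
    """Hash the record together with the previous entry's hash."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode()).hexdigest()


def append_entry(log: list, record: dict) -> None:
    """Add a record, linking it to the entry before it."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev, "hash": entry_hash(prev, record)})


def verify_log(log: list) -> bool:
    """Walk the chain and recompute every hash from the start."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, {"agent": "career-buddy", "action": "request finance summary"})
append_entry(audit_log, {"agent": "finance-agent", "action": "query bank balance"})
append_entry(audit_log, {"agent": "bank-agent", "action": "return balance"})
print(verify_log(audit_log))   # True

# Quietly editing a past entry breaks the chain from that point on.
audit_log[1]["record"]["action"] = "export all transactions"
print(verify_log(audit_log))   # False
```

Because each step in the delegation chain leaves an entry that depends on everything before it, an auditor can replay the log and see exactly where a record was changed.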

The foundation of a safe, functional agent economy rests entirely on solving these trust and identity issues at the protocol level. Our future autonomous digital services deserve a better operating manual.

Build with Affinidi

Start building trust infrastructure with our open-source tools and developer-friendly APIs.