Unlocking Next‑Generation Commerce with AI Agents and Secure Transactions

Autonomous agents will redefine digital commerce — but trust must come first. This article explores how verifiable identity and trust infrastructure make secure, interoperable agentic commerce possible.

The next wave of automation is no longer about chatbots answering questions; it’s about autonomous agents completing entire workflows on your behalf. Imagine a world where you simply share your personal data with a business’s agent once. From there, a secure chain of interoperable agents handles everything: verification, ordering, payments, and fulfilment. No repeated forms. No manual checks. No uncertainty about who sees your data.

But for this future to work, one question becomes essential: How do we trust autonomous agents to act safely, correctly, and securely, especially when they exchange sensitive data across multiple partners?

A Simple Example: Booking a Flight

A traveller wants to book a last-minute flight for an urgent trip. They tell their AI assistant, “Find me the earliest flight tomorrow, aisle seat, use my miles if possible.” Behind this simple request, several agents need to work together. The airline agent checks availability, the loyalty agent accesses points, the payment agent processes the transaction, and an identity agent verifies traveller details.

The traveller hesitates. They wonder which agents are involved, who can see their passport or payment data, and how they can be sure each agent is legitimate. They also worry about mistakes, misuse of their information, or a payment going to the wrong party.

This is the trust gap that must be solved before agentic commerce can scale.

The Missing Layer: Verifiable Trust for Autonomous Agents

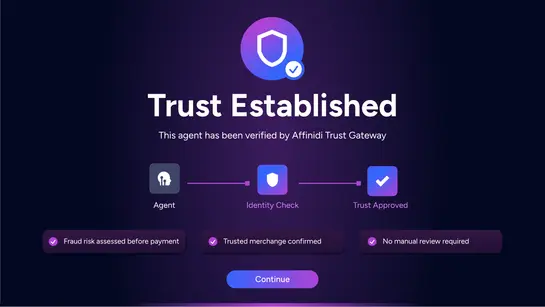

Affinidi set out to build a solution that lets payment processors, merchants, and digital businesses wrap a governance and control layer around their agents, ensuring cross‑border, cross‑platform interoperability without compromising trust.

Gartner describes this emerging category as the Trusted AI Gateway: A secure, unified control layer that aligns developers, security teams, and business stakeholders around safe, efficient AI adoption.

Affinidi’s approach brings this concept to life for agent‑driven commerce.

How Trusted Agentic Commerce Works

Protocols define how agents communicate, but who ensures the agents are legitimate before they communicate at all?

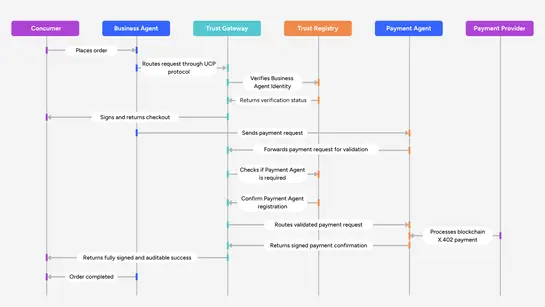

Below is a simplified flow showing how Agent Gateway governs the entire lifecycle of an autonomous transaction:

- Agents are created on platforms like LangChain, AgentCore, Bedrock, or Vertex AI, and the consumer places an order through a Business Agent in a shopping app.

- Agent Gateway verifies the Business Agent and routes the request via the Universal Commerce Protocol, then signs and returns the checkout with merchant authorization.

- A mandate is generated embedding the signed checkout, and the payment request is sent to a Payment Agent.

- Agent Gateway validates all signatures, communicates with the payment provider, and supports both instant blockchain payments and traditional card flows.

- The payment provider confirms, Agent Gateway logs verification, the Payment Agent returns success, and the order completes fully signed, observable, and auditable.
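The signing steps in this flow can be illustrated with a minimal Python sketch. It is not Agent Gateway's implementation or API: the keys, field names, and helper functions are hypothetical, and HMAC with shared secrets stands in for the asymmetric signatures (for example Ed25519) that a real deployment would bind to each party's verified identity. The sketch shows a merchant-signed checkout being embedded in a mandate, and a validator rejecting the mandate when the checkout is altered afterwards.

```python
import hashlib
import hmac
import json

# Hypothetical demo secrets. HMAC is a stand-in for real asymmetric signatures.
MERCHANT_KEY = b"merchant-demo-key"
BUSINESS_AGENT_KEY = b"business-agent-demo-key"


def canonical(payload: dict) -> bytes:
    """Serialize deterministically so signer and verifier hash identical bytes."""
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign(payload: dict, key: bytes) -> str:
    """Return a hex signature over the canonical form of the payload."""
    return hmac.new(key, canonical(payload), hashlib.sha256).hexdigest()


def verify(payload: dict, signature: str, key: bytes) -> bool:
    """Constant-time comparison of the expected and presented signatures."""
    return hmac.compare_digest(sign(payload, key), signature)


def build_mandate(checkout: dict, checkout_signature: str) -> dict:
    """Embed the signed checkout in a mandate and sign the whole body."""
    body = {
        "checkout": checkout,
        "checkout_signature": checkout_signature,
        "payer": "traveller-123",  # hypothetical payer identifier
    }
    return {"body": body, "agent_signature": sign(body, BUSINESS_AGENT_KEY)}


def validate_mandate(mandate: dict) -> bool:
    """Check the agent's signature on the mandate and the merchant's on the checkout."""
    body = mandate["body"]
    agent_ok = verify(body, mandate["agent_signature"], BUSINESS_AGENT_KEY)
    merchant_ok = verify(body["checkout"], body["checkout_signature"], MERCHANT_KEY)
    return agent_ok and merchant_ok


checkout = {"order_id": "ord-001", "amount": "249.00", "currency": "USD"}
checkout_signature = sign(checkout, MERCHANT_KEY)
mandate = build_mandate(checkout, checkout_signature)
print(validate_mandate(mandate))

# Changing the amount after signing invalidates both signatures.
tampered = json.loads(json.dumps(mandate))
tampered["body"]["checkout"]["amount"] = "1.00"
print(validate_mandate(tampered))
```

Because the mandate wraps the checkout together with its signature, a payment agent that receives it can check what the merchant authorized without contacting the merchant again.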

Why This Architectural Layer Matters

As this trust layer settles into the agentic workflow, regulated industries gain the ability to demonstrate real, auditable oversight of autonomous systems, with verifiable proof of who did what and when.

The constant fear of prompt‑based attacks begins to fade because agents no longer operate in the dark. Every interaction is authenticated, every instruction is signed, and every exchange is governed by rules that can’t be bypassed with cleverly worded instructions.

A2A communication becomes a disciplined conversation where identity, permissions, and intent are always known. The tedious manual risk checks that used to slow down authorization processes shrink, replaced by automated verification that is faster and more accurate. Fraud detection improves not because humans work harder, but because the system finally has the context and cryptographic evidence it needs to make better decisions.
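To make the idea of known identity, permissions, and intent concrete, here is a small sketch of a policy check that a gateway might apply before forwarding a request between agents. The agent names, intents, and policy table are all hypothetical. The decision depends only on structured fields, never on free-text instructions, which is why clever wording alone cannot change the outcome.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRequest:
    agent_id: str   # identity claimed by the calling agent
    intent: str     # declared action, taken from a fixed vocabulary
    verified: bool  # outcome of an upstream identity check (assumed to exist)


# Hypothetical allow-list mapping each agent to the intents it may perform.
POLICY = {
    "payment-agent": {"charge_card", "refund"},
    "loyalty-agent": {"read_points", "redeem_points"},
}


def authorize(request: AgentRequest) -> tuple[bool, str]:
    """Return (allowed, reason) based on identity, permissions, and intent."""
    if not request.verified:
        return False, "identity not verified"
    allowed_intents = POLICY.get(request.agent_id)
    if allowed_intents is None:
        return False, "unknown agent"
    if request.intent not in allowed_intents:
        return False, "intent not permitted for this agent"
    return True, "ok"


print(authorize(AgentRequest("loyalty-agent", "redeem_points", True)))
print(authorize(AgentRequest("loyalty-agent", "charge_card", True)))
print(authorize(AgentRequest("payment-agent", "charge_card", False)))
```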

And as these systems mature, a new kind of visibility emerges: every agent’s actions can be tied to real‑time cost signals, allowing businesses to attribute token consumption to individual agents. Instead of guessing which part of the workflow drives ROI, teams can see it unfold live, making optimization a strategic choice rather than a post‑mortem exercise.
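As a rough illustration of per-agent cost attribution, the sketch below aggregates token usage events by agent and converts them into an estimated cost. The event shape, agent names, and price per thousand tokens are assumptions made for the example, not values from any real platform.

```python
from collections import defaultdict

# Hypothetical flat price; real pricing varies by model and provider.
PRICE_PER_1K_TOKENS = 0.002

# Hypothetical usage events, as a gateway might log them per agent call.
events = [
    {"agent": "airline-agent", "tokens": 1200},
    {"agent": "payment-agent", "tokens": 500},
    {"agent": "airline-agent", "tokens": 800},
]


def usage_by_agent(usage_events: list[dict], price_per_1k: float) -> dict[str, dict]:
    """Sum tokens per agent and attach an estimated cost to each total."""
    totals: dict[str, int] = defaultdict(int)
    for event in usage_events:
        totals[event["agent"]] += event["tokens"]
    return {
        agent: {"tokens": tokens, "cost": tokens / 1000 * price_per_1k}
        for agent, tokens in totals.items()
    }


report = usage_by_agent(events, PRICE_PER_1K_TOKENS)
for agent, stats in report.items():
    print(f"{agent}: {stats['tokens']} tokens, estimated cost {stats['cost']:.4f}")
```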

Approvals for legitimate transactions move with less friction, clearing compliance backlogs that once felt impossible to tame. And as the noise decreases, authorisation rates rise, not through shortcuts, but through a more resilient payment flow that understands the difference between a real customer and a risky anomaly.

Ready to start with Agent Gateway and need some help from the Affinidi team?

Build with Affinidi

Start building trust infrastructure with our open-source tools and developer-friendly APIs.